- Blog

- Lightroom vs lightroom classic

- Banania benco

- Papi chulo lorna lyrics english

- Albertsons market roswell nm weekly ad

- Siemens simatic 200

- Maxwell sketchup patcher

- Justin timberlake rihanna rehab

- Ravenfield mods download

- Altium pcb design

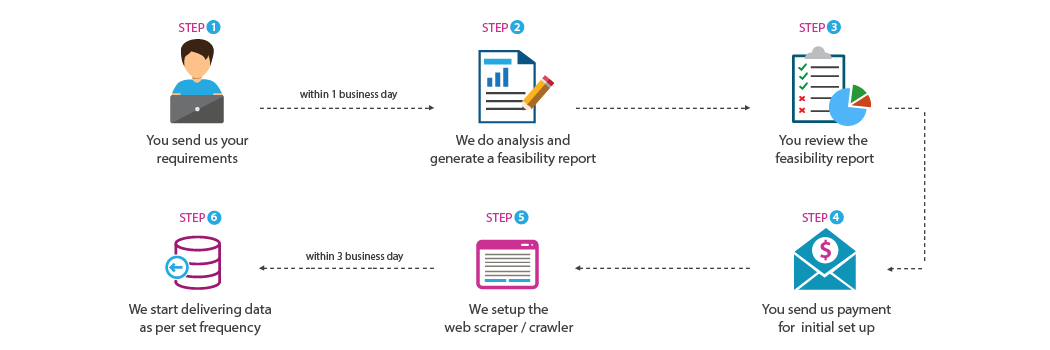

- Building a webscraper

- Miami vice font online creator

- Define share

In our previous blog, we defined web scraping as the art and science of acquiring intended data from a publicly available target website. I call it an art because we often run into challenges that require out-of-the-box thinking.

In this guide, we will cover the process of building a web scraper that:

- Avoids most of the anti-scraper methodologies.
- Generates precise and detailed logs for analysis.
- Sends real-time email notification alerts.
- Cleans the extracted data and stores it in the desired file format.
- Allows on-the-fly performance measurement.

Before we dive deep, a brief background that lists a few ground realities is necessary. Our application needed to scrape product lists and their prices from four different websites. In this case, the team came across several problems. This blog will help you identify and tackle these challenges. I have also curated a list of the technology stack that can aid you in building a powerful web scraper.

We recommend using Python as the coding language owing to its large selection of libraries. The supporting frameworks change based on the nature of the websites that need to be scraped. Here's a list of scenarios and possible solutions:

- A combination of Selenium and Scrapy is ideal for websites that have fewer details in their URLs and change data on action events such as clicking drop-downs.
- Bare Selenium and Python scripts are of great help if scraping is blocked by the website's robots.txt.

These are but a few of the scenarios, and not all challenges may be visible right away.

Now, let's take tiny steps towards our end goal. The most important aspect of building a web scraper is to avoid getting banned! Websites have defensive systems against bots, i.e. they integrate with anti-scraping technologies.
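Three of the goals listed above (detailed logs, cleaned data stored in a chosen file format, and on-the-fly performance measurement) can be sketched with the Python standard library alone. This is a minimal illustration, not the team's actual implementation: the sample rows, the CSV column names, and the helpers `clean_price`, `clean_rows`, `write_csv`, and `timed` are all hypothetical names chosen for this example, and no website is contacted.

```python
import csv
import io
import logging
import re
import time

logging.basicConfig(level=logging.INFO,
                    format="%(asctime)s %(levelname)s %(message)s")
log = logging.getLogger("scraper_sketch")  # hypothetical logger name


def clean_price(raw):
    """Parse the first number in a price string; return None if there is none."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        return None
    return float(match.group().replace(",", ""))


def clean_rows(raw_rows):
    """Trim product names, parse prices, and log and skip unparseable rows."""
    cleaned = []
    for name, raw_price in raw_rows:
        price = clean_price(raw_price)
        if price is None:
            log.warning("skipping %r: unparseable price %r", name, raw_price)
            continue
        cleaned.append({"product": name.strip(), "price": price})
    return cleaned


def write_csv(rows, handle):
    """Write cleaned rows to any file-like object as CSV with a header."""
    writer = csv.DictWriter(handle, fieldnames=["product", "price"])
    writer.writeheader()
    writer.writerows(rows)


def timed(label, func, *args):
    """Run func(*args) and log how long it took."""
    start = time.perf_counter()
    result = func(*args)
    log.info("%s took %.4f s", label, time.perf_counter() - start)
    return result


# Hypothetical scraped values, standing in for real product listings.
raw = [("  Widget A ", "$1,299.00"), ("Widget B", "N/A"), ("Widget C\n", "49.5 USD")]

rows = timed("clean_rows", clean_rows, raw)
buffer = io.StringIO()  # swap for open("products.csv", "w", newline="") to write a file
write_csv(rows, buffer)
print(buffer.getvalue())
```

Routing skipped rows through the logger rather than dropping them silently keeps a record of which listings failed to parse, which is the kind of detail that helps when a site changes its page layout.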
